Cognitive science's gift to artificial intelligence development is, without a doubt, cognitive modelling. In short, cognitive modelling is about coming up with a mathematical or computational model of how the brain operates. These models can then be used in various AI tasks to solve problems the human brain excels at. In this post we will go through three different approaches to cognitive modelling. 😊

Computationalism

Computationalism states that the mind, or cognition, is nothing but computations taking place in the brain. These computations are mechanical, rule-based operations performed on symbols, and they don't require any understanding of what the symbols mean. The computations can differ between different kinds of brain architectures (for reference, see identity theories).
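To make "rule-based operations on symbols" a bit more concrete, here is a minimal sketch of a symbol-rewriting system. The rules and the symbols `A`, `B` and `C` are made up for illustration; the point is only that the program follows the rules without any notion of what the symbols stand for.

```python
# Hypothetical rewrite rules: each maps a pair of adjacent symbols to a new symbol.
# The symbols are arbitrary tokens; the program never "knows" what they mean.
RULES = {
    ("A", "B"): "C",
    ("C", "A"): "B",
}

def rewrite(symbols):
    """Apply the first matching rule to adjacent symbols until none applies."""
    symbols = list(symbols)
    changed = True
    while changed:
        changed = False
        for i in range(len(symbols) - 1):
            pair = (symbols[i], symbols[i + 1])
            if pair in RULES:
                # Replace the matched pair with the symbol the rule dictates.
                symbols[i:i + 2] = [RULES[pair]]
                changed = True
                break
    return symbols

result = rewrite(["A", "B", "A"])
print(result)  # ['B']
```

Here `A B A` first becomes `C A` and then `B`, purely by pattern matching. Whether that counts as understanding anything is exactly what the debate is about.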

The extreme form of computationalism states that the fundamental requirement for anything intelligent is that the intelligent system (such as the brain) can manipulate symbols. What these symbols mean, i.e. their semantics, derives from the connections between the symbols inside the system. The limits of the system's computational capabilities are seen as the limits of the mind itself. This, however, raises problems such as how to explain qualia and how to really explain the meaning of the symbols. If the meaning of the symbols is based only on their connections to each other, then how can this castle in the air ever be connected to the real world? 🤔

For reference, take a look at the Chinese room argument that goes against computationalism.

Connectionism

Whereas computationalism relies on rule-based logic, connectionism uses probabilities. Connectionism has its foundation in neural modelling, which is in turn an abstraction of how physical neurons function in the brain. The brain is a huge network of interconnected neurons, each of which is specialized in a very particular task, such as recognizing Jennifer Aniston. Together, these neurons can perform much more complex operations and, furthermore, they can learn. Learning is the key thing here. A rule-based system can't go very far with learning, but a neural system is by design meant to learn new things. The quote every neuroscientist knows is "neurons that fire together, wire together", and that, in my opinion, is a nice way to put the complexity of learning in layman's terms. 😃
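The "fire together, wire together" idea can be sketched in a few lines. This is a heavily simplified Hebbian-style update, not a model of real neurons: the function name `hebbian_update`, the learning rate and the activity history are all assumptions made up for illustration.

```python
def hebbian_update(weight, pre_active, post_active, learning_rate=0.1):
    """Strengthen the connection only when both neurons are active together."""
    if pre_active and post_active:
        return weight + learning_rate
    return weight

weight = 0.0
# Hypothetical activity history: (first neuron fired, second neuron fired)
history = [(True, True), (True, False), (False, True), (True, True)]
for pre, post in history:
    weight = hebbian_update(weight, pre, post)

print(weight)  # 0.2
```

The connection only grows on the two steps where both neurons fired at once, which is the whole idea in miniature.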

Now that we are in the deep waters of neural modelling, you might ask: how does this all work? If you jump straight to more advanced neural networks, such as the ones used in deep learning, you will get puzzled easily. But hold on, the main principle is relatively simple. A neural network always consists of neurons, so if we manage to understand how one individual neuron operates by itself, we can more or less understand how it will work in a network, and thus we gain a basic understanding of deep learning. 😊

Perceptron

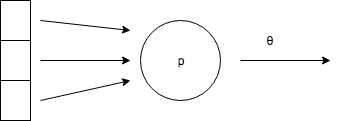

The perceptron is a simple one-neuron model that we will look at now to understand the basics behind neural networks. A perceptron takes inputs and produces an output if the sum of those inputs reaches its threshold value.

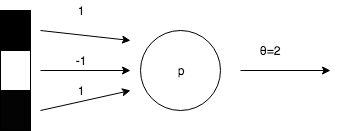

The circle with the P in it is the perceptron. The arrows pointing towards it carry the possible inputs, and the arrow labelled θ is the output. The θ indicates the threshold at which the perceptron will fire. So, let's add an input to the perceptron and adjust the values so that the perceptron will react to a colon (:), but nothing else.

The value on each input arrow is what the perceptron receives from that arrow if the box it points from is black. No value is given if the box is white. In neuroscience, this value is known as activation potential. Then we just have to sum the activation potentials together to see if the perceptron will fire, i.e. whether their sum reaches the value indicated by θ. You can do the math: because the arrow in the middle has the value -1 assigned to it, there's only one combination of inputs that will make the perceptron fire. Thus this perceptron works spot on in detecting colons. 👍🏻
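If you'd rather let the computer do the math, here is a minimal sketch of such a colon detector. Only the middle weight of -1 comes from the text above. The three stacked boxes, the outer weights of 1 and the threshold of 2 are my assumptions for illustration, and the values in the figure may differ.

```python
from itertools import product

# Assumed values: only the middle weight (-1) is stated in the text;
# the outer weights and the threshold are illustrative guesses.
WEIGHTS = (1, -1, 1)   # top box, middle box, bottom box
THETA = 2              # threshold the summed input must reach

def fires(boxes):
    """boxes: tuple of 0 (white) or 1 (black), from top to bottom."""
    total = sum(w * b for w, b in zip(WEIGHTS, boxes))
    return total >= THETA

# Try all eight black/white combinations and keep the ones that fire.
firing_patterns = [p for p in product((0, 1), repeat=3) if fires(p)]
print(firing_patterns)  # [(1, 0, 1)]
```

The only pattern that fires is black, white, black, which is the colon. A fully black column sums to 1 because of the -1 in the middle, so it stays below the threshold.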

I promised you that these models can learn, and that's also true in the case of a perceptron. There's an algorithm that makes a perceptron learn to solve the task automatically, but I won't cover it here. Now, when you think of a neural network, it's just a multitude of perceptron-like neurons that are connected to each other and pass activation potentials around inside the network depending on whether they fire or not. 🔥

Dynamical systems

Computationalism and connectionism both take an input and produce an output based on rules or a neural network, which, of course, is useful in logical reasoning or problem solving. But what if we want to model physical movement? The brain has to go through a lot of computations so that we can walk and keep our balance effortlessly. In such a case, we don't really have a single input that should map to a certain output. The dynamical perspective on cognitive modelling looks into how we get from the current state to the next one, such as getting from holding my left foot 3 cm above the ground to holding it 4 cm above the ground, and so on, up until I have taken a full step. 👞

An example would be the domino effect. There, no individual piece has a plan that it executes; each one just reacts to its environment.
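The domino example can be sketched as a simple state-update rule: the next state depends only on the current one, and each piece only looks at its neighbour. The function `step` and the four-piece row are hypothetical, just a toy to show the idea.

```python
def step(fallen):
    """Next state: a piece is down if it was already down or its left neighbour is down."""
    return [
        fallen[i] or (i > 0 and fallen[i - 1])
        for i in range(len(fallen))
    ]

state = [True, False, False, False]  # only the first domino has been pushed
history = [state]
while not all(state):
    state = step(state)
    history.append(state)

for s in history:
    print(s)
```

Nothing in the code plans the whole chain reaction. It emerges from applying the same local rule over and over, one state at a time.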

Conclusions

Different points of view on cognitive modelling serve different purposes. All of them are interested in information processing in the brain, and thus cognitive science is at the core of AI development. Not all AIs try to simulate the human brain, however; those used in video games, for example, usually don't. Still, many real-world AI problems are tackled with an approach based on the concepts of cognitive modelling. Now that we have gone through all this, a question might pop up: can a computer develop a human-like consciousness? 😊